Previously, we discussed the importance of a well-designed scorecard in our blog, Benefits of a Balanced Scorecard for Performance Management. We continue our discussion by examining what qualifies as sound design and what to consider while creating a balanced scorecard.

Striking the right balance in a scorecard is essential to its effectiveness as a performance management tool. A scorecard that is neither too complex nor oversimplified helps you avoid confusion, misguided focus and undesired actions. Let’s explore how to achieve that balance and encourage the right behaviors across your customer experience (CX) operations.

1. Statement of Direction

Every organization has a statement of direction, motto or saying that indicates its core values and beliefs. Remember the statement as you create your scorecards because it acts as a set of organizational guidelines.

When designing a balanced scorecard, include the metrics related to your core values and ensure they are weighted appropriately.

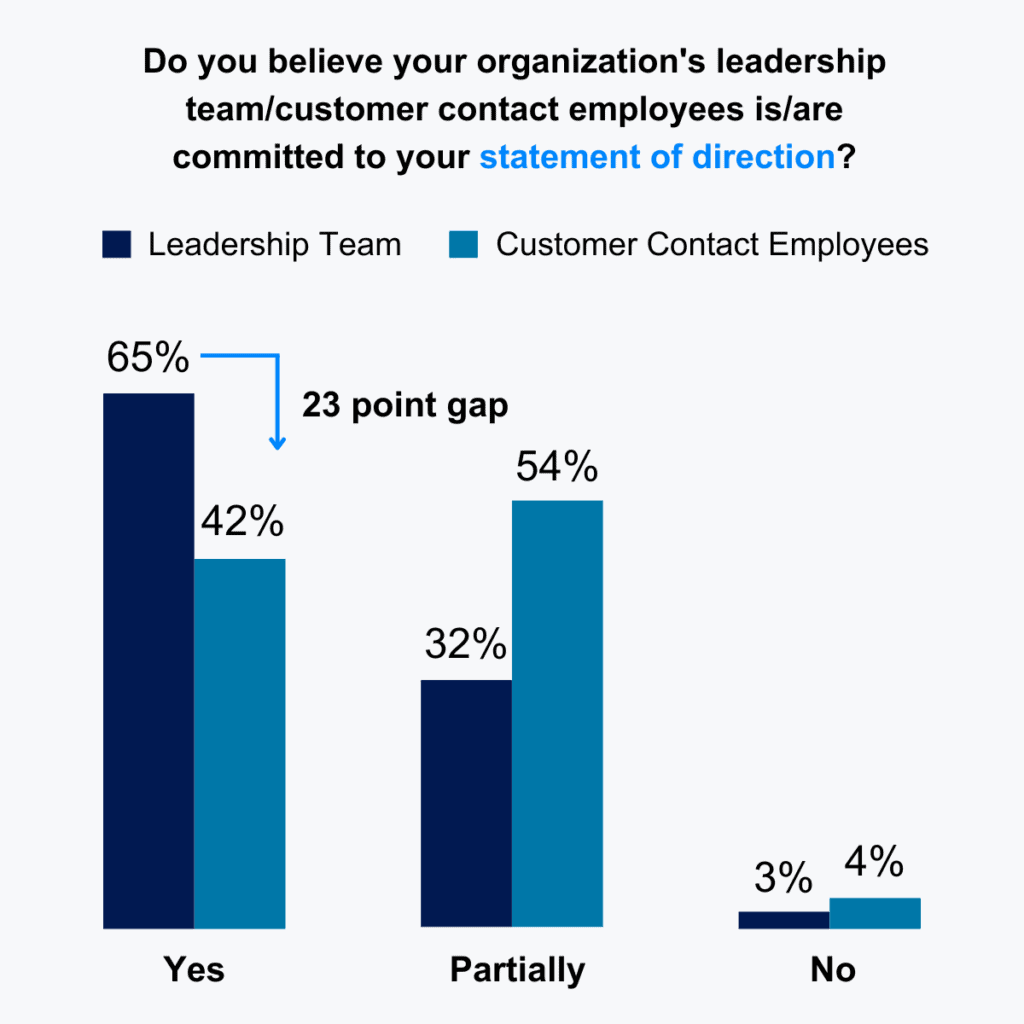

Organizations frequently have a gap between leadership and employee perception of alignment with their statement of direction. Supporting that statement with a scorecard helps align these views.

For example, if your goal is to be a low-cost outsourcer, efficiency metrics like average handle time (AHT) should be at the forefront of your scorecards. For a well-known brand striving to maintain high customer satisfaction, issue resolution and quality assurance should be the primary focus. If you manage a sales organization, your scorecards wouldn’t be complete without conversion metrics.
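As a rough illustration of weighting metrics to match a statement of direction, the sketch below combines per-category target attainment into one composite score. The category names, the weights and the `composite_score` helper are all hypothetical; they assume a low-cost outsourcer that puts efficiency first, and they are not a prescribed weighting.

```python
# Hypothetical weights for a low-cost outsourcer: efficiency carries the most weight.
# The numbers are illustrative assumptions, not recommended values.
WEIGHTS = {"efficiency": 0.5, "quality": 0.3, "issue_resolution": 0.2}


def composite_score(attainment, weights):
    """Return the weighted average of per-category target attainment (0.0 to 1.0)."""
    total = sum(weights.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(weights[category] * attainment[category] for category in weights)


# Example attainment values (share of target achieved in each category).
attainment = {"efficiency": 0.9, "quality": 0.8, "issue_resolution": 1.0}
score = composite_score(attainment, WEIGHTS)
print(f"Composite score: {score:.2f}")
```

A brand focused on customer satisfaction would keep the same structure but shift weight toward issue resolution and quality.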

2. Program and Site Scorecard Comprehensiveness

Organizations should manage various metrics and key performance indicators (KPIs) across all categories, such as quality, service, efficiency, cost and customer experience. Aside from those, consider additional measures of operational success by engaging internal stakeholders to understand other metrics that matter to them.

Scorecards should have views into performance over time compared to targets. There also needs to be a mechanism to determine which metrics consistently meet targets and show sustained improvement. You should be able to view performance at the individual metric level and by the measured category (e.g., quality, service, etc.).
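One possible mechanism for flagging metrics that consistently meet targets or show sustained improvement is sketched below. The function names are hypothetical, and the definitions are assumptions made for illustration: "consistently" means every period in the window meets the target, and "sustained improvement" means each period is strictly better than the one before.

```python
def meets_target_consistently(results, target, higher_is_better=True):
    """True if every period in the window meets the target."""
    if higher_is_better:
        return all(r >= target for r in results)
    return all(r <= target for r in results)


def shows_sustained_improvement(results, higher_is_better=True):
    """True if each period is strictly better than the previous one."""
    if len(results) < 2:
        return False  # a single data point cannot show a trend
    pairs = list(zip(results, results[1:]))
    if higher_is_better:
        return all(later > earlier for earlier, later in pairs)
    return all(later < earlier for earlier, later in pairs)


# Illustrative monthly results: quality scores (higher is better)
# and AHT in seconds (lower is better).
quality_by_month = [0.91, 0.92, 0.95]
aht_by_month = [400, 380, 360]

print(meets_target_consistently(quality_by_month, target=0.90))
print(shows_sustained_improvement(aht_by_month, higher_is_better=False))
```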

3. Agent-Level Scorecards

Keep agent-level scorecards focused on agent-level metrics, separate from program- and site-level metrics. Separating the metrics allows organizations to understand which sites or programs perform better than others, and viewing performance separately can help uncover both areas of opportunity and best practices. Nothing causes motivation to evaporate more quickly than holding staff accountable for things outside their control.

For instance, service level may be important to your organization, but giving individual agents feedback against your service level goals would be less meaningful because no single agent controls it. In this case, the metric changes as you move from the program level to the agent level: you begin with the idea that speed of service is essential and end up with schedule adherence or conformance as the right metric to include on the agent scorecard.

Even utilization may be out of an agent’s hands. For example, if an agent is scheduled for more coaching or training time, they should not be penalized for the utilization impact of that unproductive time.
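A minimal sketch of one way to avoid that penalty is to remove scheduled coaching and training hours from the denominator before calculating utilization. The formula here is a simplified assumption (productive hours divided by paid hours), and `adjusted_utilization` is a hypothetical helper; your organization's utilization definition may differ.

```python
def adjusted_utilization(productive_hours, paid_hours, scheduled_offline_hours):
    """Utilization with scheduled coaching/training removed from the denominator.

    Assumes a simplified definition: productive hours / paid hours.
    """
    available_hours = paid_hours - scheduled_offline_hours
    if available_hours <= 0:
        raise ValueError("scheduled offline hours must be less than paid hours")
    return productive_hours / available_hours


# Illustrative week: 40 paid hours, 4 of them scheduled for coaching.
raw = 30 / 40
adjusted = adjusted_utilization(productive_hours=30, paid_hours=40, scheduled_offline_hours=4)
print(f"Raw utilization: {raw:.1%}, adjusted: {adjusted:.1%}")
```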

Determining the root behaviors that lead to program-level performance can help you choose the right metrics. Instead of customer satisfaction (CSAT) as an agent metric, you may find that issue resolution, quality performance, or tool utilization are more controllable by an agent and drive the desired outcome.


4. Balancing Complexity

Revamping a scorecard can be an exciting opportunity for organizations to boost performance visibility and adoption. However, intricate design can thwart achieving these goals, making it crucial to prioritize simplicity and user-friendliness.

Complex calculations can cause staff to lose confidence in the scorecard tool when they produce unexpected or seemingly unfair results. But with too few metrics, staff may find creative ways to reach objectives that have unintended consequences. Overemphasizing specific metrics can also work to the detriment of others (e.g., pushing for ever-lower AHT could drive unwanted behaviors elsewhere). To ensure you capture enough of the right information, do the following:

a. Clearly define the metrics and calculations and make them easily accessible (preferably within the scorecard tool). You can set up definitions via simple hyperlinks to a definition document or a tooltip that activates when the cursor is over the metric.

b. Include all metrics in the scorecard and provide the tools necessary for the user to manage those metrics. For example, the scorecard could have a view that gives a stack ranking of results based on the population being compared (i.e., agent, team, program, site, etc.) and another view that provides insight into trend-based performance for each metric depending on the category it falls under.

c. Make liberal use of “banded” targets where performance is found within an upper and lower limit. Banded targets make the most sense with metrics like AHT or escalation/transfer rate. Performance outside these bands is considered not achieving the desired target.
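The banded-target idea in item c can be sketched as a simple range check. The band limits and agent results below are made-up values for illustration, and `within_band` is a hypothetical helper.

```python
def within_band(value, lower, upper):
    """True if the result falls inside the band (limits included)."""
    return lower <= value <= upper


# Hypothetical AHT band in seconds; the limits are illustrative only.
AHT_BAND = (300, 420)

aht_results = {"agent_a": 280, "agent_b": 360, "agent_c": 450}
status = {agent: within_band(aht, *AHT_BAND) for agent, aht in aht_results.items()}

for agent, on_target in status.items():
    print(agent, "on target" if on_target else "outside band")
```

Here both the unusually short and the unusually long AHT miss the target, which discourages rushing customers as much as it discourages overly long contacts.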

5. Test Your Scorecard

A lot goes into adequately deploying and using a scorecard; we will discuss deployment in our next post. Before investing time, effort and money, run the new scorecard through some tests. There are three main types of tests, and the best practice is to complete all of them:

a. Seek fresh perspectives for unbiased feedback and insights by consulting individuals uninvolved in the scorecard’s creation. The more diversity in departments and levels within the organization, the better. Getting tunnel vision after working on a scorecard for days, weeks, or months is easy.

Outside perspectives should include usability testing. Is it easy to operate? Is the user interface (UI) intuitive? Are any links broken? Is an expected feature or metric missing? If using a cloud-based scorecard, are the latency and responsiveness acceptable?

b. Test the logic on past performance. You likely have the metric results for recent months, so plug that data into your new scorecard and see what it tells you. Does the scorecard accurately reflect high and low performers? If not, does the scorecard need reworking, or do you need a culture shift?

For instance, if AHT alone made someone successful, a balanced scorecard may change who appears to be doing well. It also could mean that there were favored agents who management thought were doing well but were underperforming. Having more objective measures may change management’s views of employees and the culture.

At a minimum, the data can tell you if the change will unsettle some staff. It may also highlight an area that you overlooked during development. Fixing the issue now will be much easier than fixing it after deployment.

c. The third and final test is a stress test. Enter extreme performance into your scorecard. What if an agent escalates 100% of their work? What if they have calls or chats that take 300% of the expected length? Can good performance in other areas offset these deficits? If there is a qualitative description of the performance, does it still fit? If you find problems, rework your scorecard and retest. Again, fixing the issue now will be much easier than fixing it after deployment.
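The back-test and stress test in items b and c can be sketched against a toy scoring rule. Everything below is an assumption made for illustration: the metrics, targets, weights, the capped `attainment` rule and the agent data are hypothetical, and a real scorecard would substitute its own logic.

```python
# Hypothetical targets: (target value, higher_is_better).
TARGETS = {
    "quality": (0.90, True),
    "escalation_rate": (0.10, False),
    "aht_seconds": (360, False),
}
# Hypothetical weights; illustrative only.
WEIGHTS = {"quality": 0.4, "escalation_rate": 0.3, "aht_seconds": 0.3}


def attainment(actual, target, higher_is_better=True):
    """Ratio of result to target, capped to 0.0-1.0 so no metric can dominate."""
    if higher_is_better:
        ratio = actual / target
    else:
        ratio = target / actual if actual > 0 else 1.0
    return max(0.0, min(1.0, ratio))


def score(agent):
    """Weighted sum of capped attainment across all metrics."""
    return sum(WEIGHTS[m] * attainment(agent[m], *TARGETS[m]) for m in WEIGHTS)


# Back-test: feed past results through the new logic and compare the ranking
# with who was previously considered a top performer.
past = {
    "agent_a": {"quality": 0.95, "escalation_rate": 0.08, "aht_seconds": 400},
    "agent_b": {"quality": 0.70, "escalation_rate": 0.20, "aht_seconds": 300},
}
ranking = sorted(past, key=lambda name: score(past[name]), reverse=True)
print("Back-test ranking:", ranking)

# Stress test: 100% escalation and AHT at 300% of target, with perfect quality.
extreme = {"quality": 1.0, "escalation_rate": 1.0, "aht_seconds": 3 * 360}
print(f"Stress-test score: {score(extreme):.2f}")
```

In this toy setup, the agent with the fastest AHT ranks below the agent with better quality and fewer escalations, and perfect quality still lifts the extreme case to a middling score. Whether that offset is acceptable is exactly the question the stress test is meant to raise.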

These are some of the most important things to consider when creating scorecards. By taking these five considerations to heart, you are far more likely to drive high levels of adoption, which in turn supports higher performance levels. With a well-designed scorecard, leadership and staff will manage the appropriate metrics and be confident in the accuracy of the data.